Students and learners

Turn ideas into working devices with a practical stack and clear workflows. Learn by building real AI+IoT products, not toy examples.

Build once, deploy across chips.

TuyaOpen powers next-generation AI-agent hardware. Its flexible, cross-platform C/C++ SDK supports Tuya T-Series Wi-Fi/Bluetooth MCUs, Raspberry Pi, and ESP32-series chips. It pairs with Tuya Cloud multimodal AI, integrates leading models (ChatGPT, Gemini, Qwen, Doubao, and more), and simplifies building open AI-IoT ecosystems.

Layered SDK, multimodal AI, and cloud-ready building blocks.

TKL (hardware abstraction), TAL (OS/device abstraction), libraries, services, and applications—develop once, deploy everywhere with reusable building blocks.
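The layering idea can be illustrated with a minimal C sketch of a hardware abstraction layer built on a function-pointer table. Every name in it (`hal_gpio_ops_t`, `hal_bind`, `hal_gpio_write`, `CHIP_A_OPS`, `LED_PIN`) is hypothetical and is not TuyaOpen's actual TKL or TAL API. It only shows how application code can stay portable while each chip port supplies its own implementation.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical "kernel layer" contract: each chip port fills in this table.
   Illustrative only; not the real TuyaOpen TKL API. */
typedef struct {
    const char *chip_name;
    int (*gpio_write)(int pin, bool level);
} hal_gpio_ops_t;

/* --- Port for an imaginary chip A: records the last write for inspection. --- */
static int chip_a_last_pin = -1;
static bool chip_a_last_level = false;

static int chip_a_gpio_write(int pin, bool level)
{
    chip_a_last_pin = pin;
    chip_a_last_level = level;
    return 0; /* 0 = success */
}

static const hal_gpio_ops_t CHIP_A_OPS = { "chip-a", chip_a_gpio_write };

/* --- Hypothetical abstraction layer: the only API applications call. --- */
static const hal_gpio_ops_t *g_ops = NULL;

void hal_bind(const hal_gpio_ops_t *ops)
{
    assert(ops != NULL && ops->gpio_write != NULL);
    g_ops = ops;
}

int hal_gpio_write(int pin, bool level)
{
    if (g_ops == NULL) {
        return -1; /* no chip port bound yet */
    }
    return g_ops->gpio_write(pin, level);
}

/* --- Application code: portable, never calls chip-specific functions. --- */
#define LED_PIN 4 /* hypothetical pin number */

int app_set_led(bool on)
{
    return hal_gpio_write(LED_PIN, on);
}
```

Porting to another chip would mean defining a second ops table and passing it to `hal_bind`; `app_set_led` stays unchanged. In this sketch, calling `hal_gpio_write` before any port is bound returns `-1`.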

Speech (ASR/STT, KWS, TTS), vision, and sensor-based features. Integrate leading LLMs and platforms (DeepSeek, ChatGPT, Claude, Gemini, and more).

Connect to Tuya Cloud for remote control, monitoring, and OTA updates. Built-in security, device authentication, and data encryption.

Production-ready architecture from day one: reusable layers, stable connectivity, security-by-design, and scalable cloud integration so teams can move from proof-of-concept to shipped products with less rework.

From first prototype to scaled shipment, TuyaOpen helps you build bold IoT and agentic hardware faster.


Move from hackathon concepts to polished demos quickly. Reusable SDK layers and ready integrations help you ship cool hardware with less glue code.

Design voice-first, multimodal products with agent workflows, tools, and cloud orchestration that map cleanly to real devices.

Adopt a production-oriented architecture with security, OTA, and scalable cloud capabilities to reduce risk and speed time-to-market.

We built a TuyaOpen SDK Expert Skill that captures deep know-how about real hardware and is optimized for VibeCoding. It guides you from new-project setup and device authentication through build, flash, debug, and injected-event testing, with a hardware-in-the-loop mindset that makes shipping connected AI hardware faster and easier.

VibeCoding narrows down the SDK components and peripherals you need, forks a template, wires up DP (data point) and CLI hooks, then compiles a binary and flashes it to the hardware: the create, build, and flash lane in the demo.

Provision the device identity, open the monitor stream, connect to Wi-Fi, bind to Tuya Cloud, and push DP telemetry: the authorization credentials and live cloud path you see in the log.
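The bring-up path described above is essentially an ordered sequence of stages, which can be modeled and unit-tested off-target as a small state machine. This is a sketch under stated assumptions: the stage and event names (`stage_t`, `event_t`, `next_stage`, `replay`) are hypothetical, and the rule that losing Wi-Fi drops back to the provisioned stage is a simplification. It is not how the TuyaOpen SDK tracks connectivity internally.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical bring-up stages, ordered to mirror the sequence in the log. */
typedef enum {
    STAGE_UNPROVISIONED, /* no UUID/AuthKey written yet */
    STAGE_PROVISIONED,   /* device identity stored */
    STAGE_WIFI_UP,       /* connected to an access point */
    STAGE_CLOUD_BOUND,   /* device bound to Tuya Cloud */
    STAGE_REPORTING      /* DP telemetry flowing */
} stage_t;

typedef enum {
    EV_CREDENTIALS_WRITTEN,
    EV_WIFI_CONNECTED,
    EV_WIFI_LOST,
    EV_CLOUD_BOUND,
    EV_FIRST_DP_SENT
} event_t;

/* Pure transition function: returns the next stage, or the current
   stage unchanged if the event does not apply. */
stage_t next_stage(stage_t s, event_t ev)
{
    /* Stages are ordered, so ">=" means "Wi-Fi was up". Identity survives a
       dropped link; the device reconnects starting from Wi-Fi. */
    if (ev == EV_WIFI_LOST && s >= STAGE_WIFI_UP) {
        return STAGE_PROVISIONED;
    }
    switch (s) {
    case STAGE_UNPROVISIONED: return ev == EV_CREDENTIALS_WRITTEN ? STAGE_PROVISIONED : s;
    case STAGE_PROVISIONED:   return ev == EV_WIFI_CONNECTED ? STAGE_WIFI_UP : s;
    case STAGE_WIFI_UP:       return ev == EV_CLOUD_BOUND ? STAGE_CLOUD_BOUND : s;
    case STAGE_CLOUD_BOUND:   return ev == EV_FIRST_DP_SENT ? STAGE_REPORTING : s;
    default:                  return s;
    }
}

/* Replays a recorded event sequence from a starting stage. */
stage_t replay(stage_t start, const event_t *events, size_t n)
{
    assert(events != NULL || n == 0);
    stage_t s = start;
    for (size_t i = 0; i < n; i++) {
        s = next_stage(s, events[i]);
    }
    return s;
}
```

Keeping the transition logic pure (no I/O) is what makes it easy to feed in an event sequence captured from a monitor log and check where the device should have ended up.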

Read UART, I2C, and sensor output in real time, then inject hardware CLI commands to force reads and connectivity checks—debugging plus scripted hardware tests on the board.
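Injected hardware CLI commands like these are commonly implemented as a name-to-handler table. The sketch below assumes hypothetical commands (`sensor_read`, `net_check`) whose handlers return fixed fake readings instead of touching real UART or I2C drivers; it does not reproduce TuyaOpen's own CLI API.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

#define CLI_MAX_ARGS 8

/* Hypothetical handler signature: writes a reply into out, returns 0 on success. */
typedef int (*cli_handler_t)(int argc, char *argv[], char *out, size_t out_len);

typedef struct {
    const char *name;
    const char *help;
    cli_handler_t handler;
} cli_cmd_t;

/* Stand-ins for real drivers; on a board these would read I2C or query the network stack. */
static int cmd_sensor_read(int argc, char *argv[], char *out, size_t out_len)
{
    (void)argc;
    (void)argv;
    snprintf(out, out_len, "temp_c=23.5"); /* fixed fake reading */
    return 0;
}

static int cmd_net_check(int argc, char *argv[], char *out, size_t out_len)
{
    (void)argc;
    (void)argv;
    snprintf(out, out_len, "wifi=up cloud=online"); /* fixed fake status */
    return 0;
}

static const cli_cmd_t CLI_TABLE[] = {
    { "sensor_read", "force a sensor read",        cmd_sensor_read },
    { "net_check",   "report connectivity status", cmd_net_check },
};

/* Splits a writable command line on spaces and dispatches to the matching handler.
   Returns the handler's result, -1 for an empty line, or -2 for an unknown command. */
int cli_dispatch(char *line, char *out, size_t out_len)
{
    assert(line != NULL && out != NULL && out_len > 0);
    char *argv[CLI_MAX_ARGS];
    int argc = 0;
    for (char *tok = strtok(line, " "); tok != NULL && argc < CLI_MAX_ARGS; tok = strtok(NULL, " ")) {
        argv[argc++] = tok;
    }
    if (argc == 0) {
        return -1;
    }
    for (size_t i = 0; i < sizeof CLI_TABLE / sizeof CLI_TABLE[0]; i++) {
        if (strcmp(argv[0], CLI_TABLE[i].name) == 0) {
            return CLI_TABLE[i].handler(argc, argv, out, out_len);
        }
    }
    snprintf(out, out_len, "unknown command: %s", argv[0]);
    return -2;
}
```

`cli_dispatch` tokenizes the line in place with `strtok`, so it needs a writable buffer and is not reentrant; a CLI shared by several tasks would need a reentrant tokenizer instead.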

Write once and target every chip class, from embedded MCUs to application SoCs, with one straightforward workflow. Develop on Windows, macOS, or Linux with the same tos.py toolchain on the OS you already use. Clone the repo, run the export script at the repository root to activate the SDK environment, then follow the commands below. For host prerequisites and the Windows export scripts, see Environment setup.

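```shell
# Clone the repository (also available on Gitee)
git clone https://github.com/tuya/TuyaOpen.git
cd TuyaOpen

# Initialize the tos.py environment (Linux/macOS; on Windows use export.ps1 or export.bat)
. ./export.sh

# Check the host environment
tos.py check

# Configure, build, and flash the switch demo
cd apps/tuya_cloud/switch_demo
tos.py config choice   # select your target board
tos.py build
tos.py flash
```

Get a TuyaOpen dedicated license (UUID + AuthKey) for Tuya Cloud access. See Get Started for how to obtain one and the options for writing it to the device.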

Clone TuyaOpen from GitHub or Gitee, cd into the repo, and run the export script (. ./export.sh on Linux/macOS, or export.ps1 / export.bat on Windows) to initialize the tos.py environment. Run tos.py check, then, in your application folder, run tos.py config choice to select your board.

From the application directory, run tos.py build and tos.py flash. Use tos.py monitor when you need serial logs or authorization steps.

On the edge you ship firmware with the TuyaOpen C/C++ SDK; in the cloud you use the Tuya Cloud developer platform for multimodal AI. Text, speech, vision, and sensor data move between device and cloud so you can build next-gen AI-agent hardware and open smart ecosystems.

From coin-cell MCUs to Linux-class SoCs—pick the silicon that matches your power budget, latency goals, and AI topology. Host tooling on Windows, macOS, and Linux. See About TuyaOpen for the full matrix.

Ultra-lightweight, low-cost, and power-efficient—built for always-on IoT. Stream sensor and media data to Tuya Cloud; multimodal AI is processed in the cloud so your device stays lean.

More headroom for edge AI: run richer models locally, then fuse with Tuya Cloud AI for scale, orchestration, and the latest LLMs—one stack from device to cloud.

Start from production-oriented demos. Pick a target, open the source, and follow the guide to run it on your board in minutes.

Baseline IoT firmware for networking, pairing, cloud control, and OTA across supported targets: a simple IoT on/off switch example controlled from an app panel.
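In the switch demo, the app panel and the device exchange DP (data point) values. A minimal sketch of the device side could look like the following, assuming a hypothetical DP layout (`dp_t`) and a hypothetical switch DP ID of `1`. The actual demo's DP types and callbacks come from the SDK and are not reproduced here.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical DP model: ID, type tag, and value. Not the SDK's real structs. */
typedef enum { DP_TYPE_BOOL, DP_TYPE_VALUE } dp_type_t;

typedef struct {
    unsigned char id;
    dp_type_t type;
    union {
        bool b;
        int v;
    } value;
} dp_t;

#define DP_ID_SWITCH 1 /* hypothetical DP ID for the on/off switch */

static bool g_relay_on = false; /* stands in for a GPIO-driven relay */

/* Applies a batch of DPs received from the app panel; returns how many were applied. */
size_t handle_dp_command(const dp_t *dps, size_t n)
{
    assert(dps != NULL || n == 0);
    size_t handled = 0;
    for (size_t i = 0; i < n; i++) {
        if (dps[i].id == DP_ID_SWITCH && dps[i].type == DP_TYPE_BOOL) {
            g_relay_on = dps[i].value.b;
            handled++;
        }
        /* Unknown IDs and mismatched types are ignored rather than applied. */
    }
    return handled;
}

/* Builds the state report to send back so the panel shows the real relay state. */
dp_t build_switch_report(void)
{
    dp_t report = { .id = DP_ID_SWITCH, .type = DP_TYPE_BOOL, .value.b = g_relay_on };
    return report;
}
```

Reporting the resulting device state, rather than echoing the command back, keeps the app panel in sync even when a command is ignored.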

Voice-first AI chat demo with agent interaction, tuned for T5AI, ESP32-S3, and Raspberry Pi 4/5 class devices. Supports multimodal input and skill extension, ideal for personal assistants and conversational experiences.

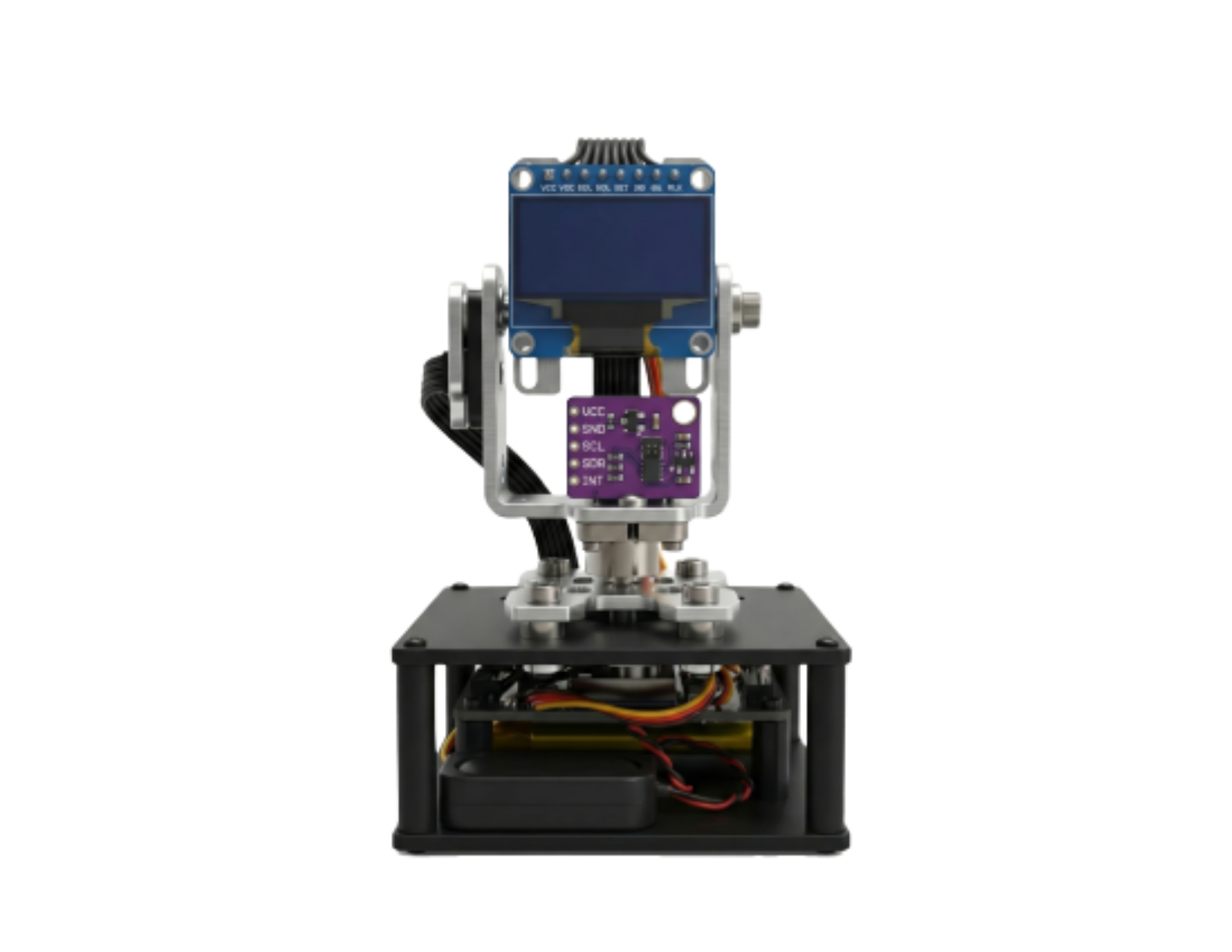

Dual-eye expressive demo with eye and mood visuals, plus conversational interaction on T5AI.

From hardware protocols and OS-level programming to LVGL GUI and generic examples, this is a practical building-block set to get started fast.

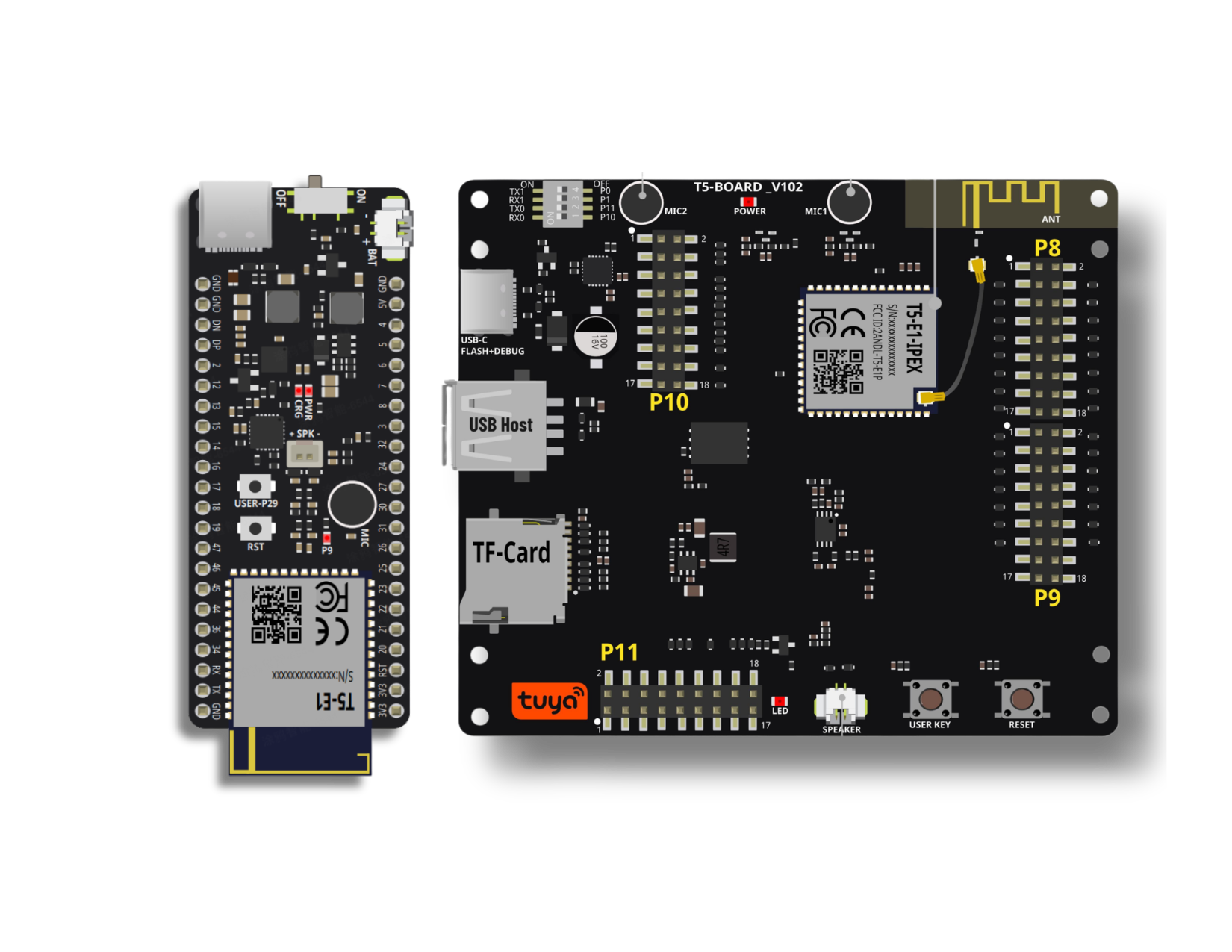

The Tuya T5 chip/module is a high-performance embedded Wi-Fi 6 + Bluetooth 5.4 dual-mode communication module built around an ARMv8-M Star (M33F) processor running at up to 480 MHz. It is purpose-built for multimodal AI interaction, with support for audio, video, and displays. Rich GPIO resources speed up integration, and built-in Wi-Fi 6 (2.4 GHz) plus BLE connectivity simplifies product bring-up.


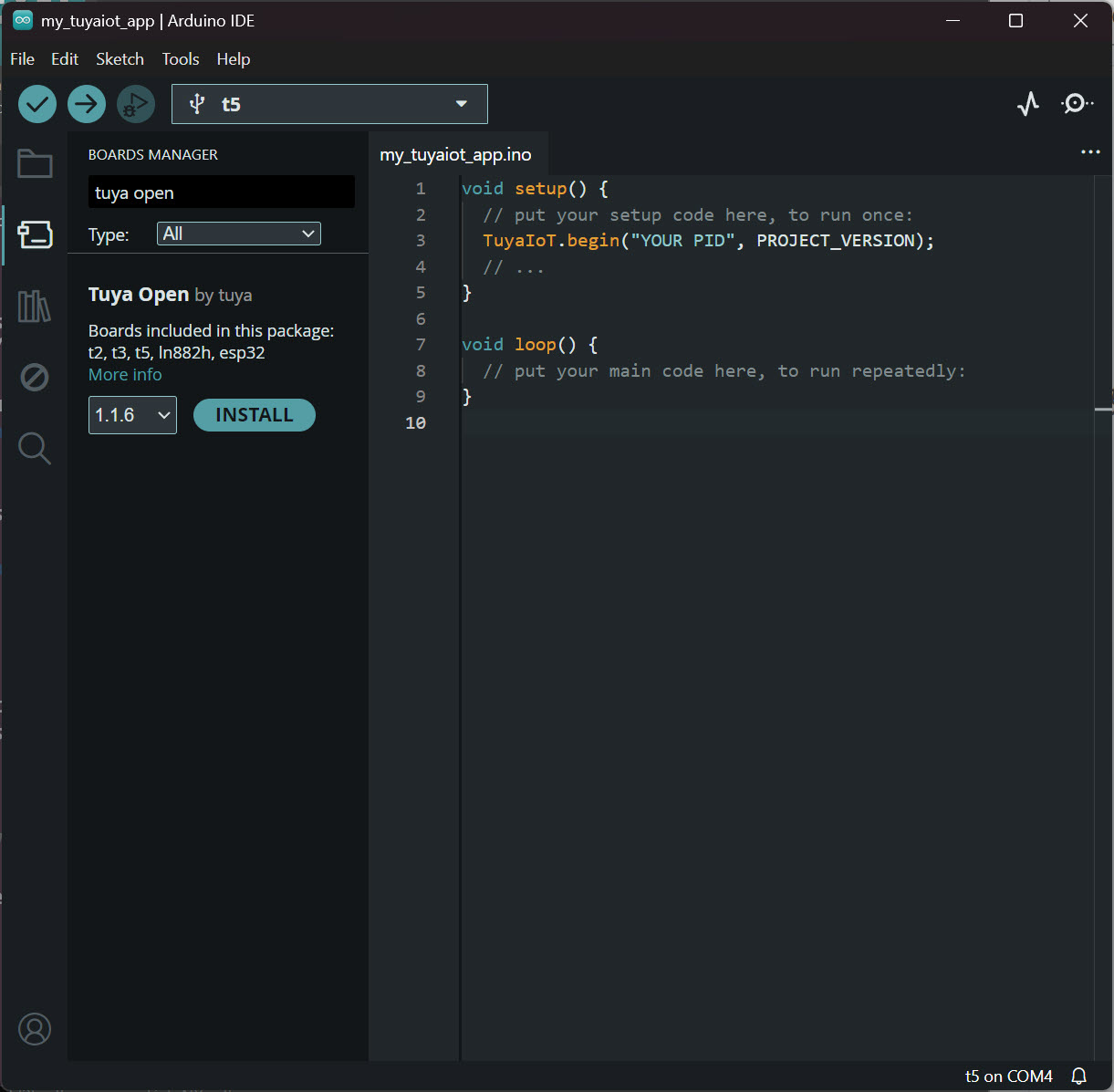

Prototype on T5 with a familiar Arduino workflow—board support and libraries integrated with TuyaOpen so you can move fast on agentic AI hardware.

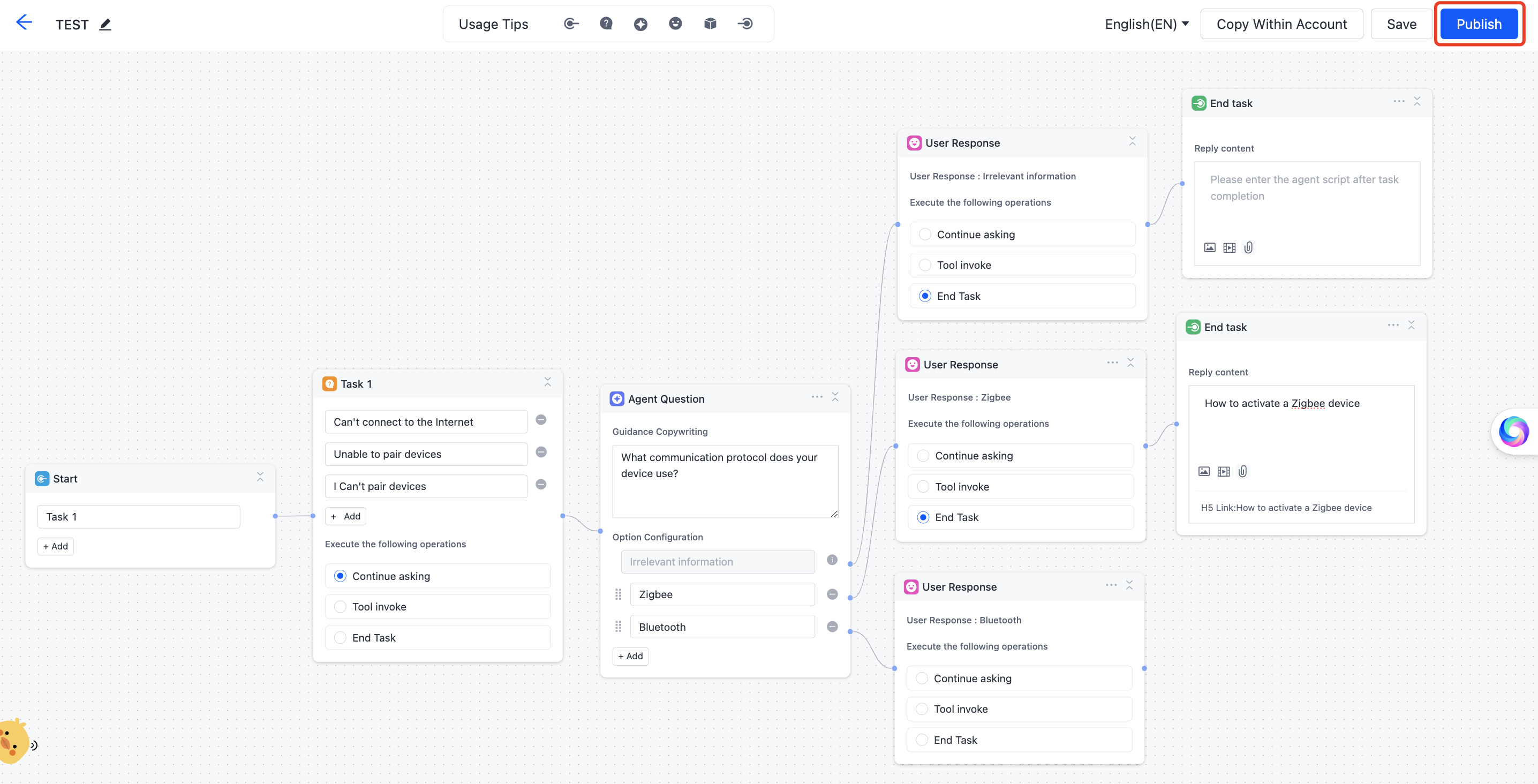

Tuya AI: multimodal edge-cloud intelligence that combines edge AI inference with a cloud agent hub, giving edge devices access to leading AI models (DeepSeek, ChatGPT, Claude, Gemini) and cross-modal functions such as voice/text interaction and image/video generation. On the Tuya Cloud platform, you can build zero-code agents and customize IoT behaviors without rebuilding firmware.

Reference designs, products, and industry patterns built with TuyaOpen: the devices above plus smart buildings, industrial IoT, and retail, with IoT connectivity and agentic AI on one stack.

A desktop companion that blends expressive on-screen faces with AI listening and understanding. Rich emoji-style reactions meet gesture and voice control: speak or wave, and the display tracks smoothly for a responsive, playful presence.

HTX Studio: Bringing Smart Farming to Remote Villages

When HTX Studio visited remote mountain villages, they saw farmers trekking rough slopes every day to find their cattle and getting hurt again and again, while ordinary 4G cellular trackers lost signal in mountain dead zones. Moved to help, the team used TuyaOpen's open-source capabilities to combine long-range LoRa connectivity with AI voice interaction in the local dialect. Because they could skip heavy low-level development, they quickly built a complete smart grazing system. With ready-made device-cloud and AI toolchains, they turned empathy into simple, reliable technology for farmers deep in the mountains. Conversational AI cattle tracking makes daily use easy, with almost no learning curve for elderly users.

Hardware vendors, silicon partners, and developer platforms collaborating around TuyaOpen.

Star the repo, open issues or discussions, join Discord, and read the Contribution Guide. Contributions are welcome under Apache License 2.0.

Contribute on GitHub

GitHub

Need help? Ask on Issues or Discussions.

Chat with other developers on Discord.

Join Discord